NPS vs Time-to-depth: What you should look at when analyzing with Stockfish

Last April, Mr. John Hartman, the digital editor for uschess.org, tweeted to us that his "old quad-core I7, using the latest dev Stockfish," has a better time-to-depth speedup than the 55,000,000-NPS Chessify cloud engine. For those new to the term, a cloud engine (in this case, cloud Stockfish) is the same Stockfish engine running on powerful cloud (internet) servers, which, unlike average local computers, offer very high speed and many threads. For instance, Stockfish 15 using four CPUs on a local computer analyzes around 5 million nodes (positions) per second, while Stockfish 15 working on Chessify’s 300,000 kN/s cloud server analyzes around 60 times more positions per second.

So the problem was that our cloud Stockfish engine, which ran on a faster server, reached the same and sometimes even a lower depth than the local one. This was taken as a problem on our part, since it's widely believed that faster hardware (or a faster cloud server) must reach the same depth sooner. It took some extensive research to understand and respond to Mr. Hartman’s concern fully, but we are glad to have been given the incentive to explore this topic more thoroughly and share our findings with all chess lovers.

It comes as no surprise that when choosing between two engines, most chess players will pick the one that reaches a higher depth in a given time. This is because higher depth is usually associated with higher accuracy of a suggested move or variation. A lower depth speedup, on the other hand, is often seen as a sign of a weaker engine. But is this belief actually true?

The top grandmasters never rely on the depth alone, for they know that the speed at which an engine reaches a certain depth (known as time-to-depth) is not a reliable measurement for the accuracy of its analysis.

There's a far more significant value when it comes to measuring the analysis accuracy. This value is known as NPS - nodes per second, and it reflects the number of positions the engine analyzes in one second. We've prepared illustrative examples and statistics below to show you why NPS is so crucial. And regardless of whether you're a GM or not, if you use chess engines for your analysis, you have a good reason to learn the meanings and values of the numbers your engines show.

Slower time-to-depth for higher-core Stockfish

First and foremost, it is a fact that Stockfish running on more CPU cores can take longer to reach the same depth than Stockfish running on fewer. Of course, when the difference in the number of CPUs is very large, the higher-core SF will still reach a given depth sooner (although not by as much as one may expect from a much faster engine). But when only a few CPUs are involved, the higher-core engine can be the slower one to reach a given depth.

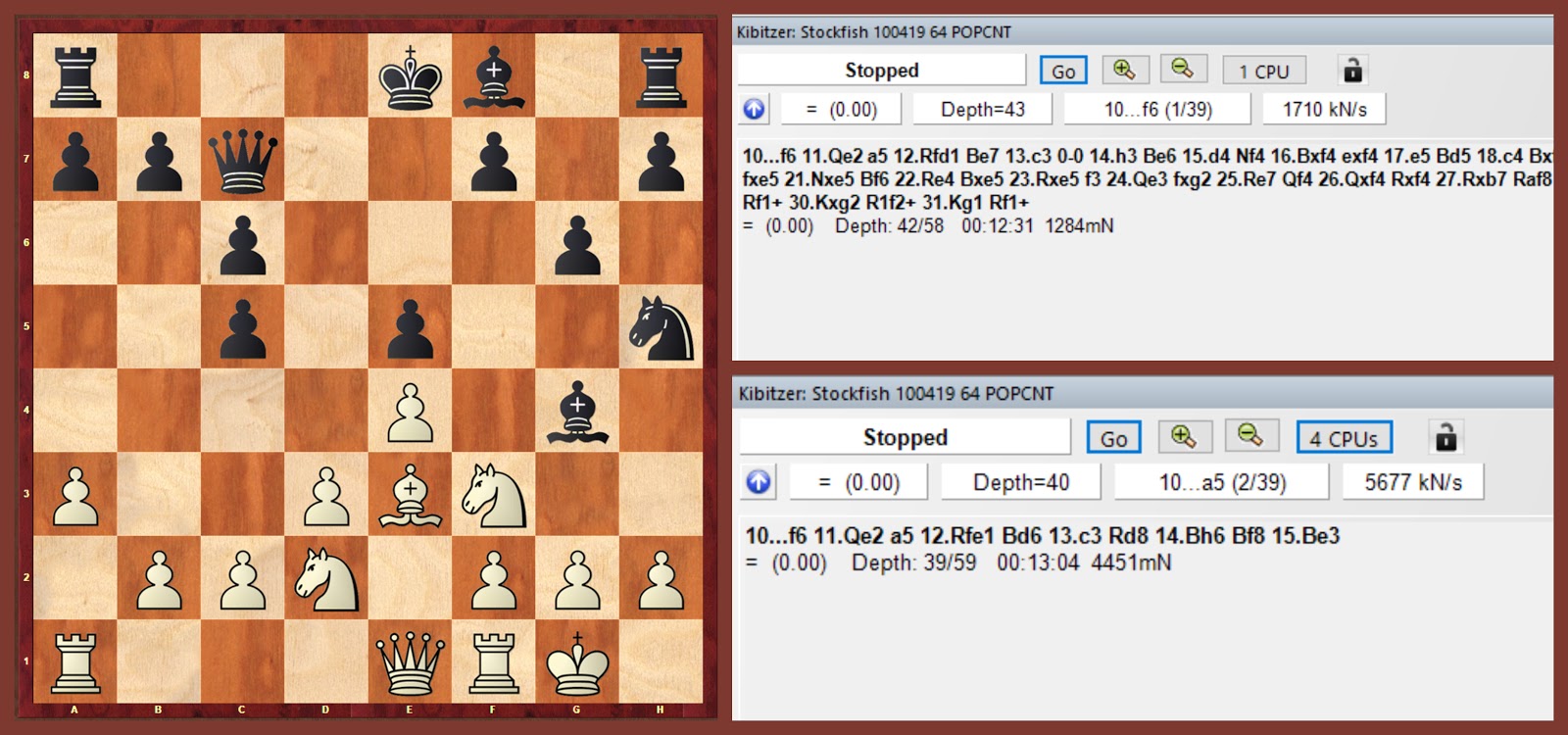

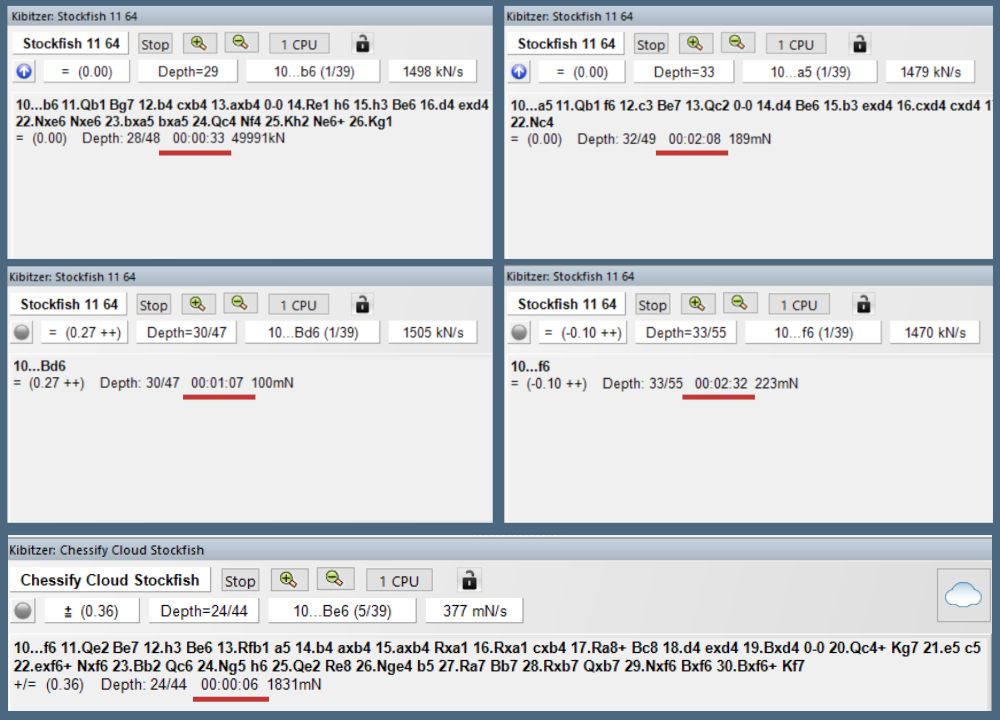

For example, Stockfish working on 50 CPUs will analyze around 50 times more positions and will still reach a higher depth much faster than Stockfish working on 1 or 4 CPUs. However, here’s what happens when Stockfish 11 is run on 1 and 4 CPUs on a local computer.

Move 10 of the game Aronian vs. Carlsen, 2019 Norway Chess. Note that the engines were run separately.

Despite analyzing a minute longer, Stockfish 11 working on 4 CPUs reaches only the same depth as the SF working on a single CPU. Of course, the same engine cannot get weaker when given more cores, so what is happening here? If we put aside the depth number, we can see that the other values, the node count (4526m) and nodes per second (4944k), actually increased about 4 times. These values show how many positions the engine analyzed, which means that Stockfish prioritizes a broader search and higher search speed over time-to-depth speedup.
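To make the units concrete, here is a minimal sketch of the arithmetic behind the figures quoted so far. The function names are ours, chosen only for illustration, and the time estimate assumes that the displayed NPS is an average over the whole run, which an engine GUI does not necessarily guarantee.

```python
def kn_per_s_to_nps(kn_per_s):
    """Convert kilonodes per second (kN/s) to nodes per second."""
    return kn_per_s * 1_000


def search_seconds(total_nodes, nps):
    """Estimate search time, assuming nps is the average over the whole run."""
    return total_nodes / nps


# Cloud server vs. a local four-CPU machine (figures quoted in this article).
cloud_nps = kn_per_s_to_nps(300_000)   # 300,000 kN/s
local_nps = 5_000_000                  # around 5m nodes per second
print(cloud_nps / local_nps)           # how many times more positions per second

# The 4-CPU Stockfish 11 run: 4526m nodes at 4944k nodes per second.
seconds = search_seconds(4_526_000_000, 4_944_000)
print(round(seconds / 60, 1), "minutes")
```

The first line printed is the "60 times" ratio mentioned in the introduction. The second is only a rough estimate of how long the 4-CPU run took, since the NPS an engine reports can fluctuate during a search.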

Lazy SMP and better search speed

Without going too deep into how chess engines work, we want to focus on an algorithm called Lazy SMP, which causes this time-to-depth slowdown for Stockfish while granting a range of benefits in return.

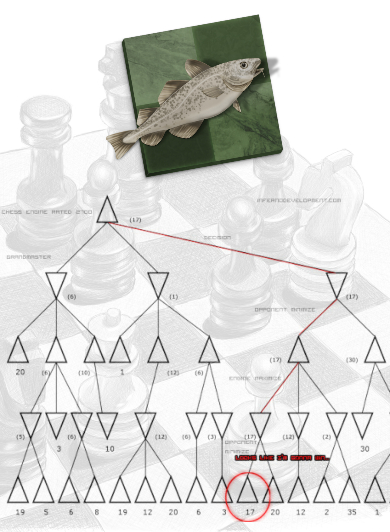

So what is Lazy SMP? With this algorithm in play, the same root position is searched by multiple threads (each CPU can afford a maximum of two threads), which are launched at different depths. This approach significantly widens the search tree, reducing the risk of ignoring essential variations that may look bad enough at low depths to be cut off by the engine. The parallel search of Lazy SMP scales well in nodes per second but does worse in time-to-depth speedup than YBW (Young Brothers Wait), the algorithm which Stockfish used previously.
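The structure described above can be illustrated with a toy sketch. This is not Stockfish's code: the "game" is an artificial tree with three moves per position, the evaluation is a deterministic pseudo-random number, there is no pruning, and all names (`negamax`, `worker`, `lazy_smp_search`) are ours. It only shows the three ingredients named in the text: every thread starts from the same root, helper threads begin at different depths, and the only communication is a shared table of already-searched positions.

```python
import threading
import zlib

BRANCHING = 3  # toy game: every position has exactly three moves


def evaluate(path):
    """Deterministic pseudo-random score in [-50, 50] for the side to move."""
    return zlib.crc32(bytes(path)) % 101 - 50


def negamax(path, depth, table, nodes):
    """Plain negamax without pruning; exact scores go into the shared table."""
    nodes[0] += 1
    if depth == 0:
        return evaluate(path)
    key = (path, depth)
    cached = table.get(key)
    if cached is not None:
        return cached  # another thread already searched this position
    best = max(-negamax(path + (move,), depth - 1, table, nodes)
               for move in range(BRANCHING))
    table[key] = best
    return best


def worker(index, target_depth, table, results):
    """Iterative deepening from the root; odd helpers start one ply deeper."""
    nodes = [0]  # per-thread node counter, so no locking is needed
    start = min(1 + index % 2, target_depth)
    score = None
    for depth in range(start, target_depth + 1):
        score = negamax((), depth, table, nodes)
    results[index] = (score, nodes[0])


def lazy_smp_search(target_depth, n_threads):
    """Run n_threads independent searches of the same root with one table."""
    table = {}  # shared by all threads; stores exact scores only
    results = [None] * n_threads
    threads = [threading.Thread(target=worker,
                                args=(i, target_depth, table, results))
               for i in range(n_threads)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    total_nodes = sum(count for _, count in results)
    return results[0][0], total_nodes


if __name__ == "__main__":
    for n in (1, 4):
        score, total = lazy_smp_search(6, n)
        print(f"{n} thread(s): score {score}, nodes visited {total}")
```

Because the table holds exact scores, every thread arrives at the same root score. The total node count with several threads depends on timing and will vary from run to run. A real engine adds alpha-beta pruning and many heuristics on top of this skeleton, which is where the wider effective search discussed in this article comes from; the sketch does not attempt to reproduce that.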

Stockfish switched to the Lazy SMP algorithm in January 2016, probably the most critical change between SF 6 and SF 7. As a consequence, the new SF took longer to reach the same search depth than SF 6, since the new algorithm prioritized a wider search tree and had to process more positions (nodes). With more CPU cores, the engine can analyze more positions, and, consequently, the time-to-depth speedup decreases further. The compensation is a remarkable increase in search speed, nodes per second (NPS), which proves to be more important for the result of Stockfish’s analysis.

Taking the same Aronian vs. Carlsen game presented above, we can see how a higher-NPS Stockfish (350,000 kN/s) instantly finds the right move, while the local (lower-NPS) Stockfish 11 struggles for the first few minutes to reach the same result. Those who followed the game probably remember that, as both the commentators and Carlsen himself admitted afterwards, the right setup for Black was to start with 10...f6 to be prepared for the complex variations after 11. Qb1 followed by b4, which would be more advantageous for White if the black pawn were on f7. When you run local Stockfish 11 on one CPU, however, it takes more than two minutes to start focusing on the right move.

As Stockfish gets better core support (in this case via a cloud server), the search tree widens, and the engine finds the f6 variation much faster. Importantly, the depth does not increase, which shows how comparatively little it affects the analysis result.

Stronger play by higher-NPS engines

Besides ensuring better analysis and evaluation, the increased search speed (NPS) also proves to be essential for the playing strength of an engine. After switching to Lazy SMP at the beginning of 2016, Stockfish has dominated the Top Chess Engine Championship (TCEC), which features the best chess engines in the world. Over nine seasons, Stockfish won six of the eight superfinals it qualified for. When Houdini made the same switch in November 2016 (see the timeline of updates), it went on to qualify for the TCEC superfinal three times and even won the 10th season in a 100-game match versus Komodo.

A Stockfish match

In order to show how important NPS is for an engine’s playing strength, we conducted an experimental tournament of Stockfish 10 running on 1, 4, and 50 CPU cores. The time control was long enough not to affect the result, and the three engines were instructed to make a move once they reached a depth of 20. Given that all the moves were made at the same depth, the tournament results effectively demonstrate how unreliable the depth value can be.
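For readers who want to try a fixed-depth search themselves, the sketch below builds the kind of UCI command sequence such a setup relies on. It is an assumption about how this could be done, not a record of the exact tournament configuration: we map "CPU cores" to Stockfish's `Threads` option, and the helper name `fixed_depth_commands` is ours.

```python
def fixed_depth_commands(threads, depth, fen=None):
    """Return UCI commands for one fixed-depth search.

    Assumption: the number of CPU cores is set through the engine's
    "Threads" option. A real client should also wait for "uciok" after
    "uci" and for "readyok" after "isready" before continuing.
    """
    position = f"position fen {fen}" if fen else "position startpos"
    return [
        "uci",
        f"setoption name Threads value {threads}",
        "isready",
        "ucinewgame",
        position,
        f"go depth {depth}",  # the engine replies with "bestmove ..." at this depth
    ]


if __name__ == "__main__":
    for cores in (1, 4, 50):
        print("\n".join(fixed_depth_commands(cores, 20)))
        print()
```

Sending these lines to the engine's standard input makes it stop and report a move once the requested depth is completed, whatever the clock says, so the thread count is the only thing that differs between the three configurations.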

The tournament consisted of three 50-game matches: SF1 vs. SF4, SF4 vs. SF50, and SF1 vs. SF50. Here are the final standings.

Discussion

Stockfish working on 50 CPU cores completely dominated the field, winning 54 games and remaining undefeated throughout the tournament. However, the match between the 1-CPU and 4-CPU Stockfish engines was the real point of interest. Given that all the moves were made at depth 20, it is remarkable that Stockfish reinforced by a 4x wider search could outscore its 1-CPU counterpart by 24 percentage points, finishing the match on +12. Its one loss, on the other hand, suggests that this analysis approach is not perfect, but the fact that two of the best engines in the world, Stockfish and Houdini, work with this algorithm says much about its effectiveness.
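The link between "+12" and "24%" follows from simple match arithmetic, sketched below with illustrative function names of our own. With W wins, D draws and L losses over N games, a side's score is W + D/2, which equals (N + W - L) / 2, so the result depends only on the net win count and not on how many games were drawn.

```python
def score_percent(net_wins, games):
    """Score percentage of the side that finished net_wins ahead.

    net_wins is wins minus losses; score = (games + net_wins) / 2 points.
    """
    return 100 * (games + net_wins) / (2 * games)


def margin_points(net_wins, games):
    """Gap between the two sides' score percentages, in percentage points."""
    return 100 * net_wins / games


if __name__ == "__main__":
    winner = score_percent(12, 50)
    print(winner, 100 - winner, margin_points(12, 50))
```

For the 50-game match between the 4-CPU and 1-CPU engines, +12 works out to 62% against 38%, which is the 24-point gap quoted above.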

Important Points

- Since the beginning of 2016, Stockfish has been working with a new algorithm called Lazy SMP.

- Lazy SMP increased Stockfish’s search speed but reduced its time-to-depth speedup.

- At a fixed depth, the same Stockfish engine gives different evaluations on two different hardware configurations.

- The playing strength of both Stockfish and Houdini significantly increased after their switch to Lazy SMP.

- Given that today’s analysis engines are predominantly used for exploring openings and finding novelties, the broader search, which decreases the risk of ignoring critical moves, is more useful than a higher time-to-depth speed.

Here are some useful links that will help you dig deeper into this topic:

- What is Depth in Chess?

- NPS - What are the "Nodes per Second" in Chess Engine Analysis

- Lazy SMP and Work Sharing

- A complete chess engine parallelized using Lazy SMP

- Stockfish 7 progress

- Time to depth concerns

- What's new in Stockfish 7

Special thanks to Mr. Han Schut for his valuable feedback on this blog.